In February of this year, the New York Times hosted the ‘New Work Summit’, gathering some of the world's greatest minds to “…assess the opportunities and risks that are now emerging as artificial intelligence accelerates its transformation across industries.” Exciting stuff!

As a follow-up, they published an excellent article, ‘Seeking Ground Rules for A.I.’, with some of the conference results. (You can read the full version here.)

“How should we, as a society, deal with these issues [A.I.]? Many have asked the question. Not everyone can agree on the answers,” writes Cade Metz. “Google sees things differently from Microsoft. A few thousand Google employees see things differently from Google. The Pentagon has its own vantage point.”

Despite the conflict of opinions, the NYT managed to collate a list of recommendations from the conference attendees – these are listed below.

- Transparency: Companies should be transparent about the design, intention and use of their A.I. technology.

- Disclosure: Companies should clearly disclose to users what data is being collected and how it is being used.

- Privacy: Users should be able to easily opt out of data collection.

- Diversity: A.I. technology should be developed by inherently diverse teams.

- Bias: Companies should strive to avoid bias in A.I. by drawing on diverse data sets.

- Trust: Organizations should have internal processes to self-regulate the misuse of A.I. Have a chief ethics officer, ethics board, etc.

- Accountability: There should be a common set of standards by which companies are held accountable for the use and impact of their A.I. technology.

- Collective governance: Companies should work together to self-regulate the industry.

- Regulation: Companies should work with regulators to develop appropriate laws to govern the use of A.I.

- “Complementarity”: Treat A.I. as a tool for humans to use, not a replacement for human work.

Excellent food for thought.

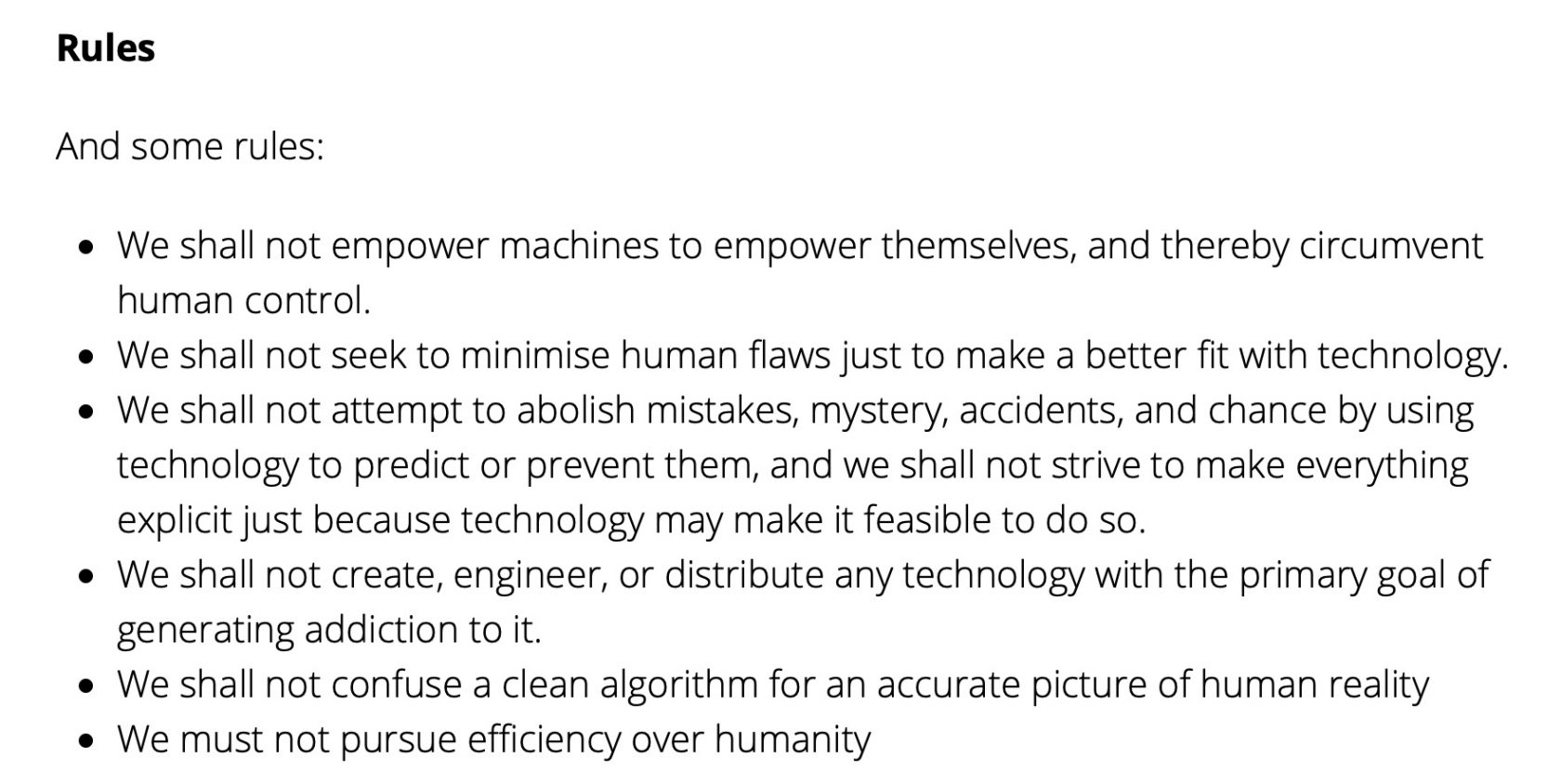

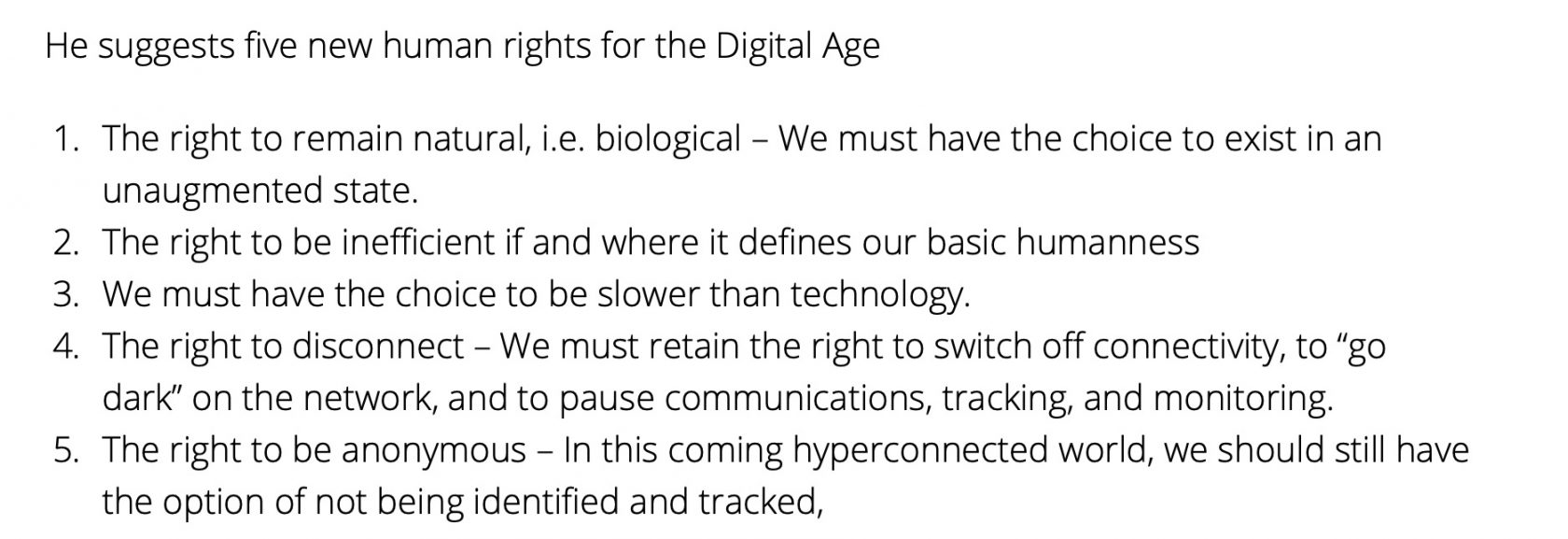

If this interests you, take a look at my five new human rights for the Digital Age. I cover similar themes there, which are explored in more depth in my book, Technology Vs Humanity.

Human flourishing at the centre!