Have a look at the two stories below. The first, by Ted Chiang in The New Yorker, is super-relevant to the current GenAI debate and to the burning question of whether the rise of AI will indeed turbo-charge widespread technological unemployment.

For those who don't read longer posts, here is my main takeaway from these two pieces: we really need to stop using technological progress to double down on the outmoded objective of increasing GDP, i.e. on attaining ever more growth and profit. Otherwise we will use our great scientific and technological achievements in the worst possible way, i.e. to create an increasingly toxic and dysfunctional society. Or maybe the recent, meteoric rise of AI will actually turbo-charge the switch to the new paradigm based on the 4Ps: People, Planet, Purpose and Prosperity…?

Buckminster Fuller, a futurist who has been a very big influence on my work for quite some time now, said approximately 50 years ago: “Humanity is acquiring all the right technology for all the wrong reasons.”

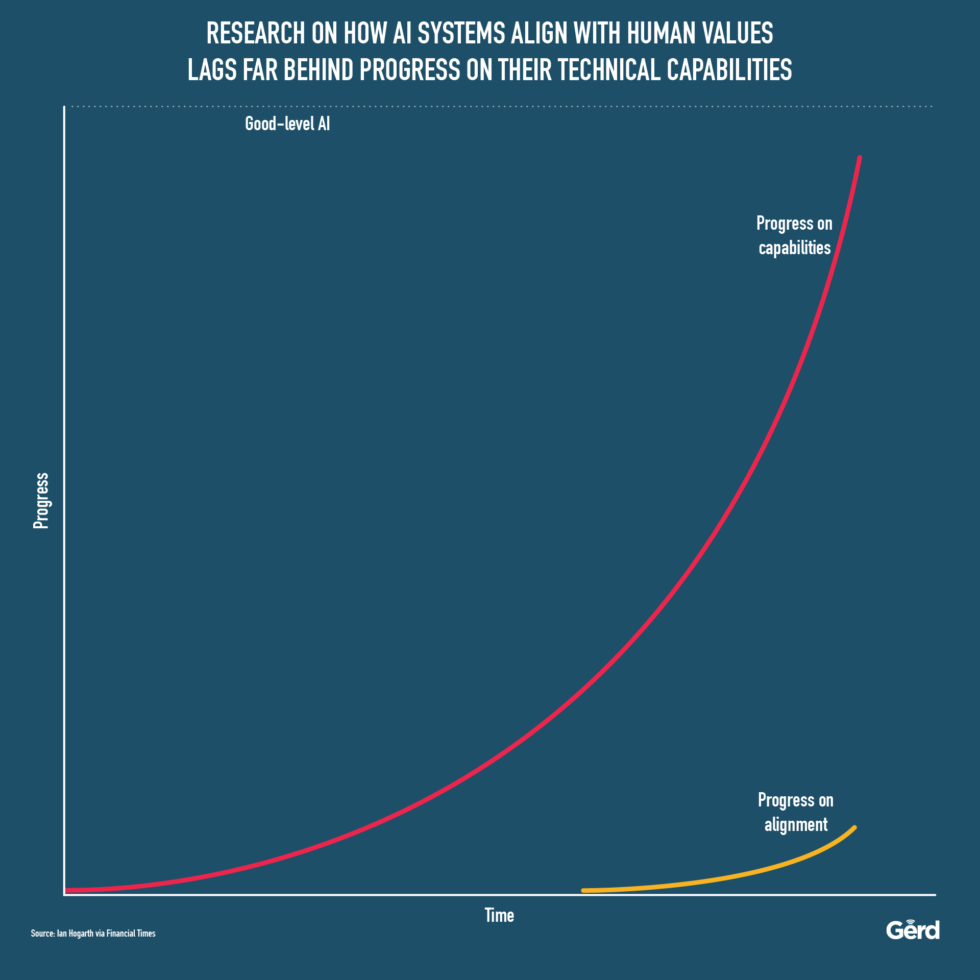

“The computer scientist Stuart Russell has cited the parable of King Midas, who demanded that everything he touched turn into gold, to illustrate the dangers of an A.I. doing what you tell it to do instead of what you want it to do” (also known as the alignment problem). *From the New Yorker piece.

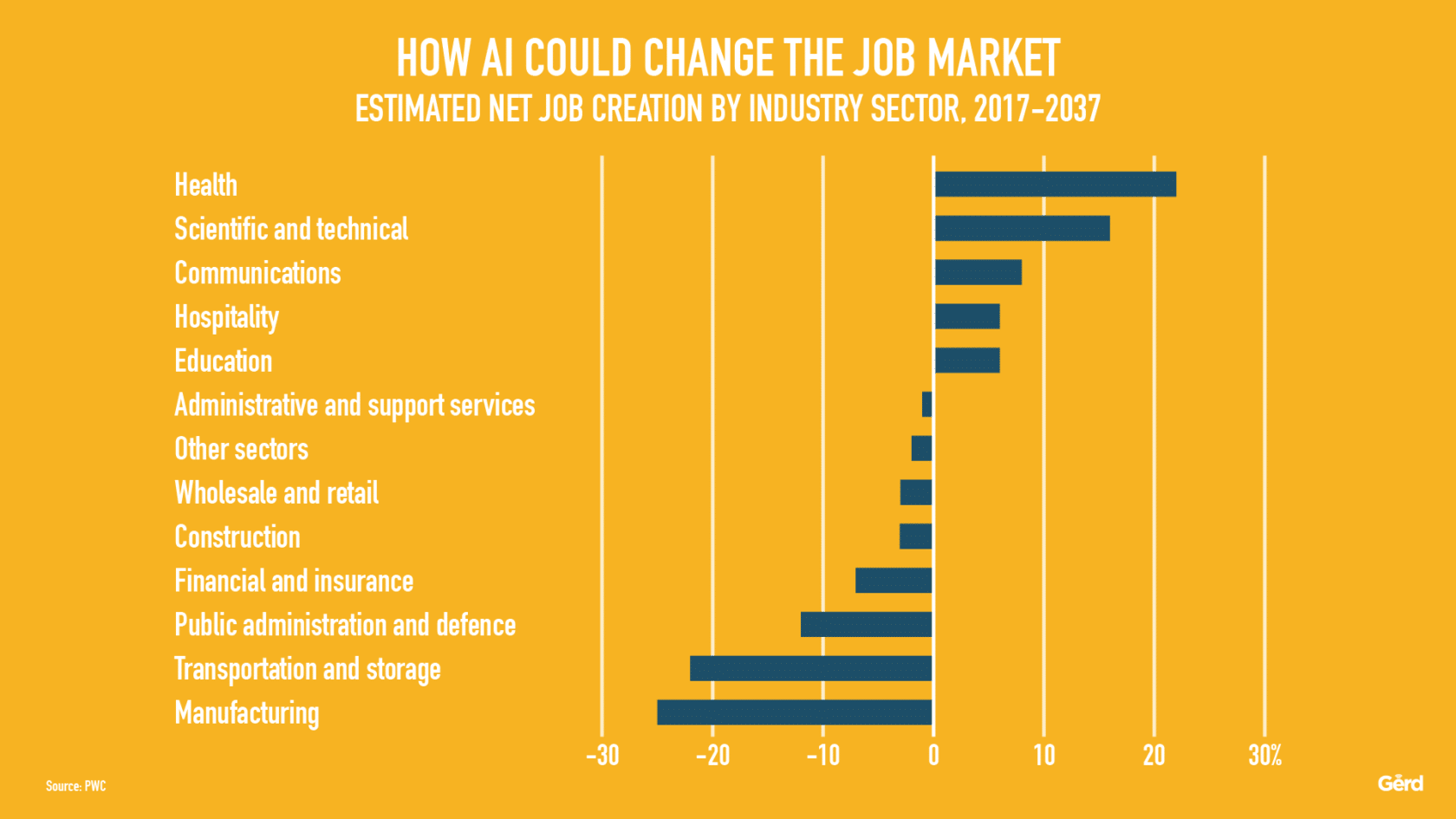

No matter what you think about McKinsey (and consulting firms in general) and their alleged role as “capital’s willing executioners”, these are very valid arguments: AI is very likely to be used to generate more corporate profit with ever fewer employees, while the remaining humans become subject to enormous pressure to compete with smart machines (or, more generally, to comply). Dehumanisation is a huge temptation here.

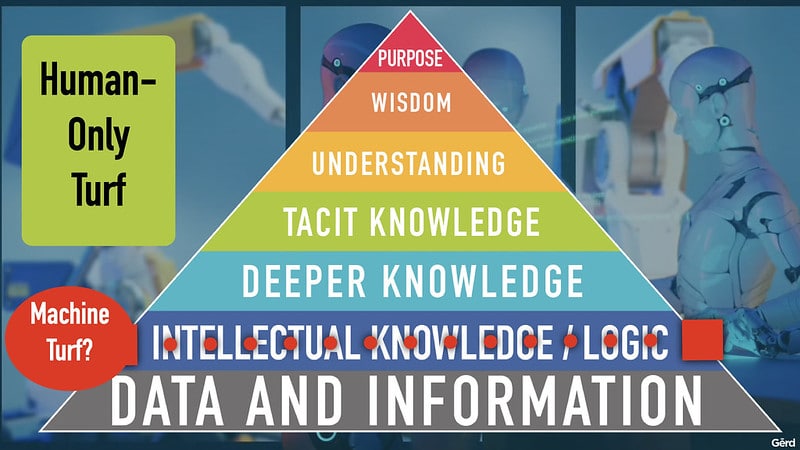

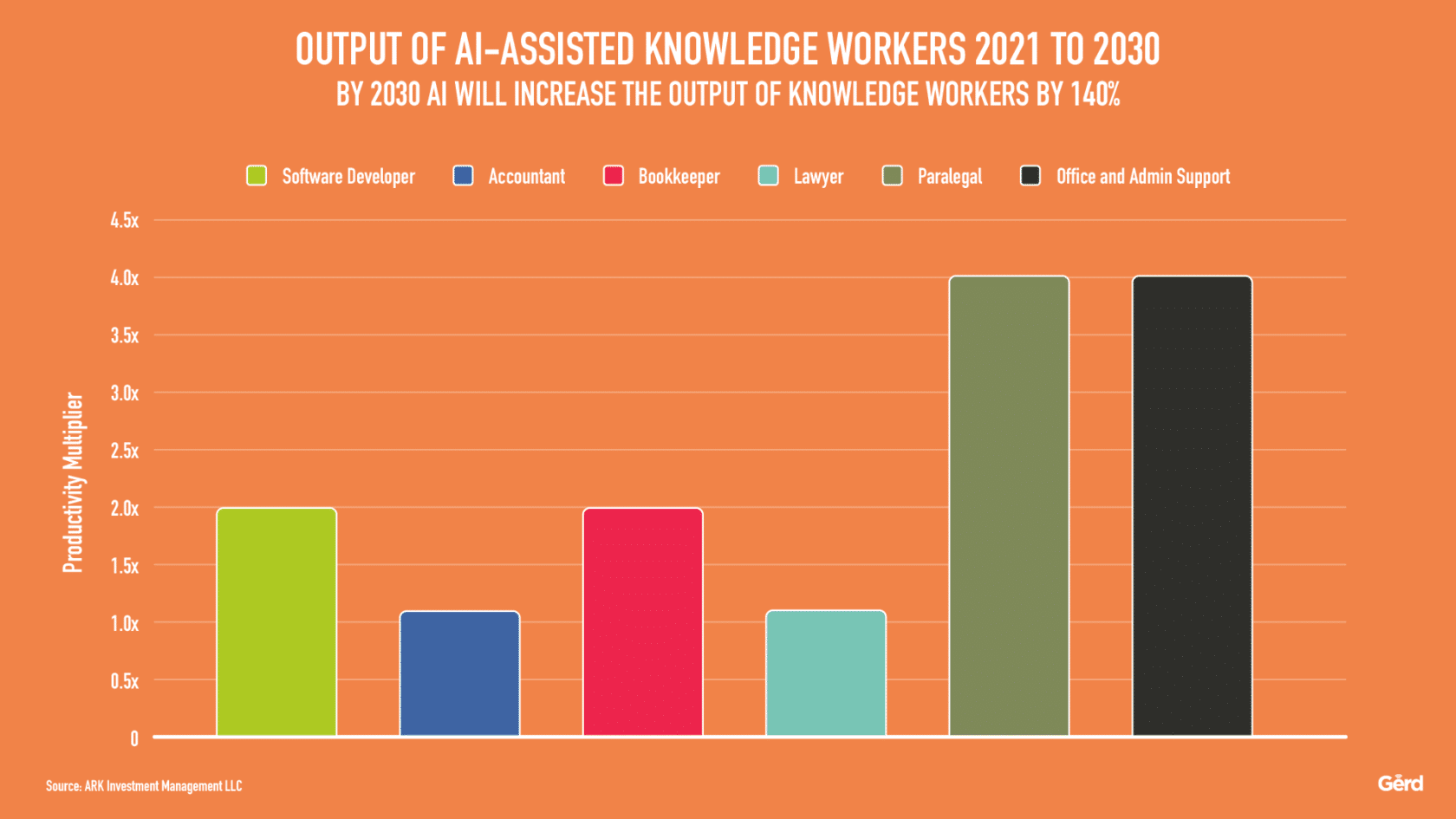

Yet my hunch (and hope) is that this might be the same story we witnessed with social media. At first, everyone argued that large enterprises could save huge amounts of marketing money thanks to social media, but it ended up being an entirely new cost factor. The same will probably apply to AI: it may look like enterprises could automate away a lot of current human routines (and therefore big chunks of jobs) using AI (or rather, IA, as I keep saying), and thus have fewer people (armed with AI) doing faster and better work. But the reality might just be that we are moving up the food chain towards more human-only work. At least… that would be my hope.

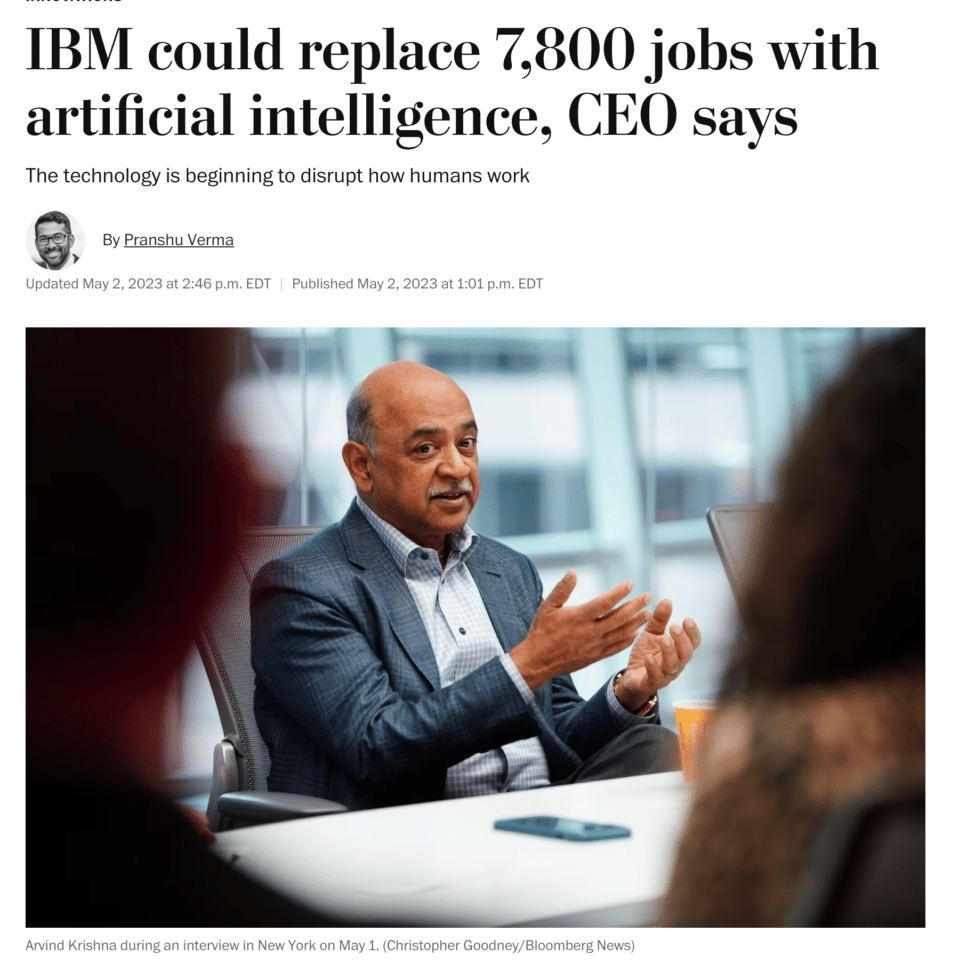

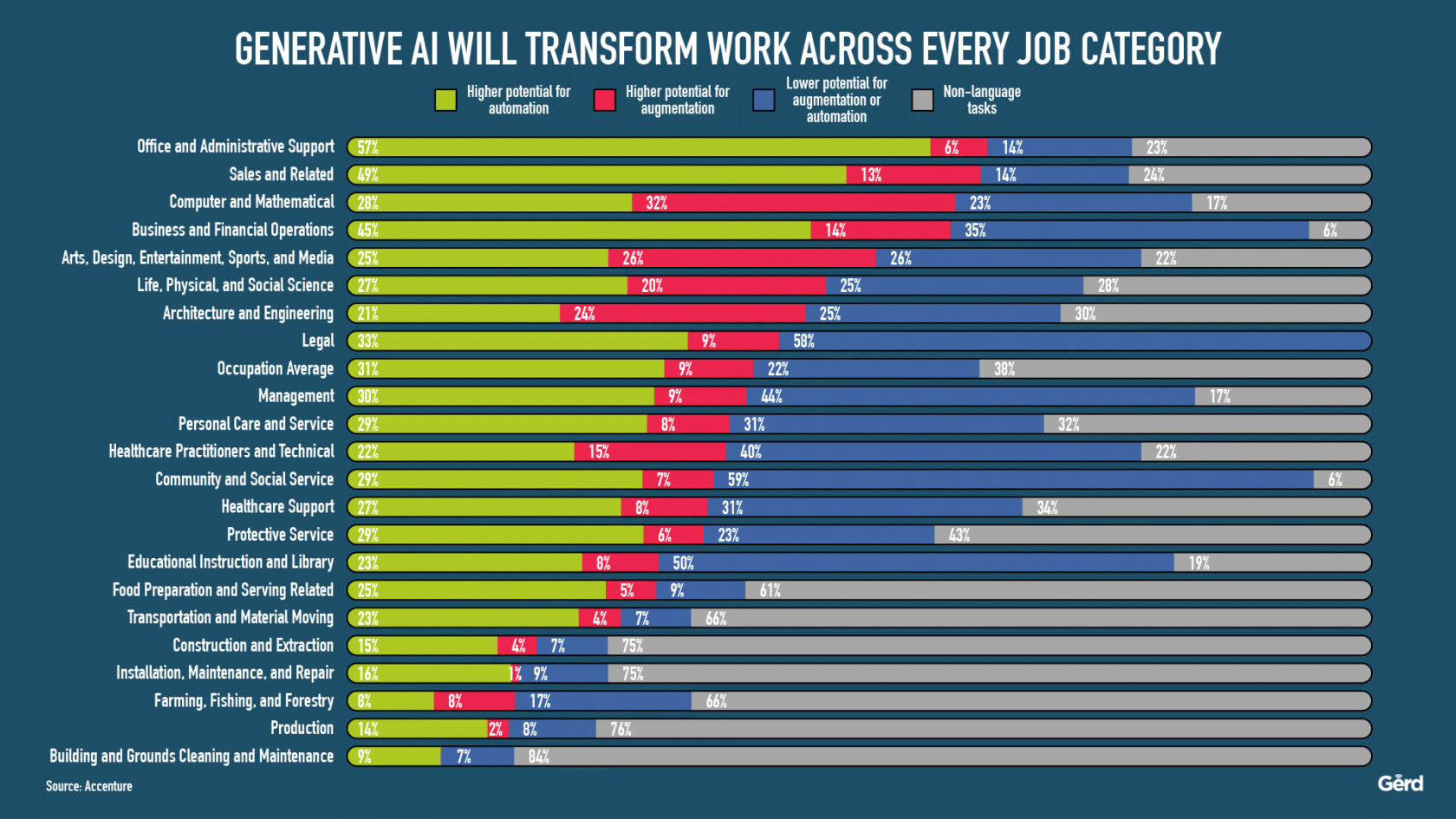

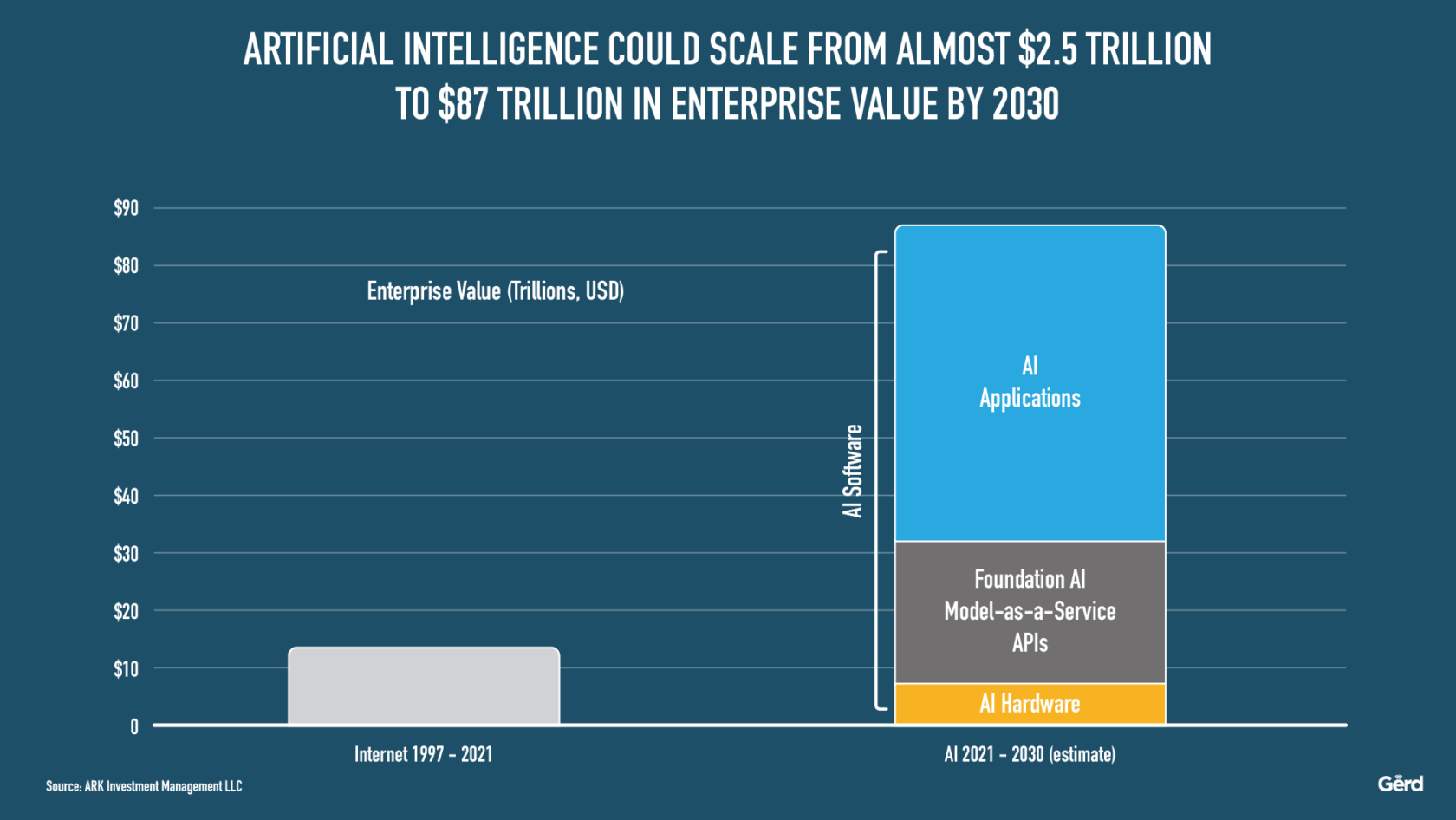

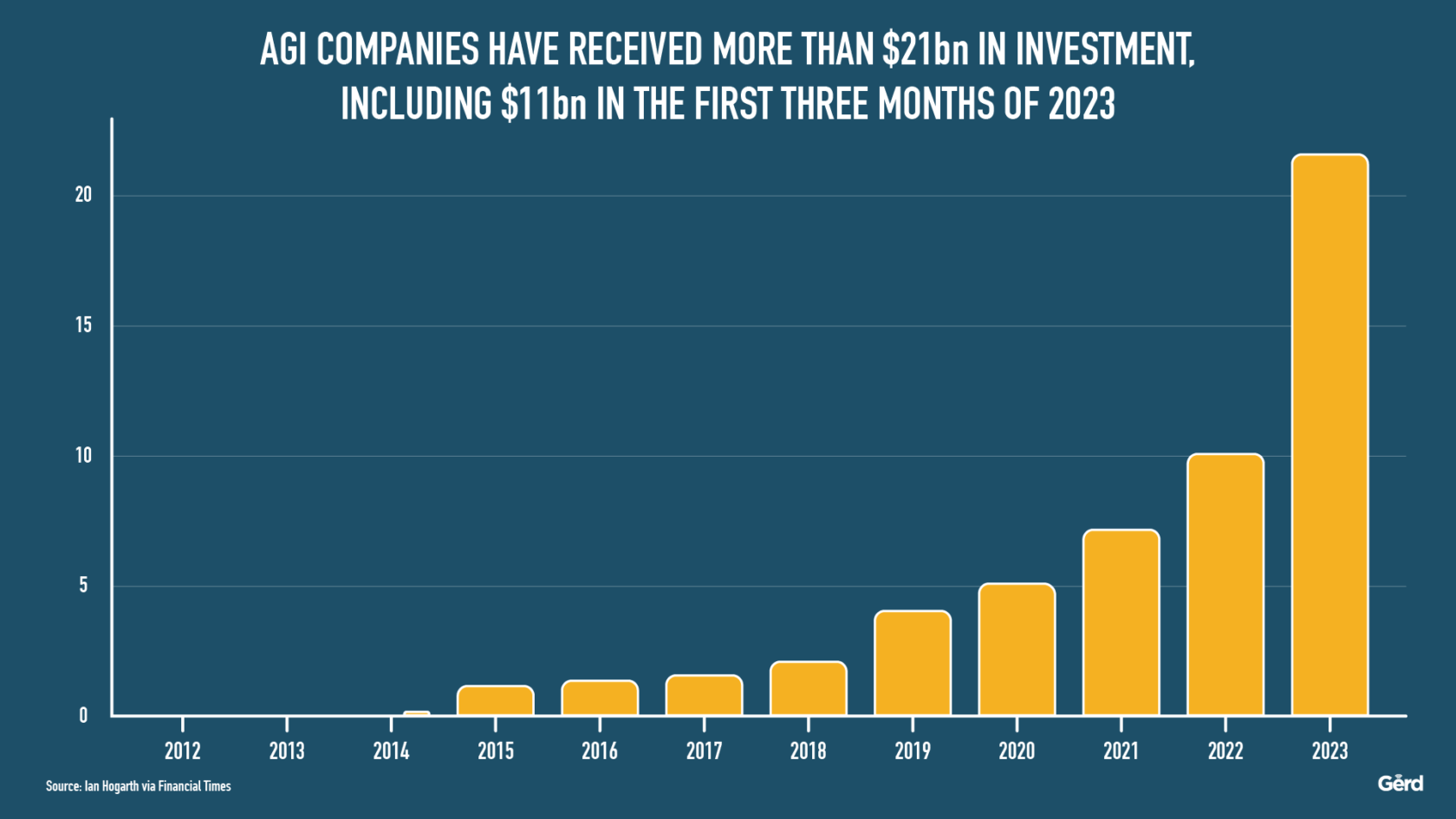

Some supporting stats and illustrations below:

Here are some juicy quotes via Ted Chiang and The New Yorker (use this archive.ph link if you have paywall issues):

“Just as A.I. promises to offer managers a cheap replacement for human workers, so McKinsey and similar firms helped normalize the practice of mass layoffs as a way of increasing stock prices and executive compensation, contributing to the destruction of the middle class in America… A former McKinsey employee has described the company as ‘capital’s willing executioners’: if you want something done but don’t want to get your hands dirty, McKinsey will do it for you. That escape from accountability is one of the most valuable services that management consultancies provide… Even in its current rudimentary form, A.I. has become a way for a company to evade responsibility by saying that it’s just doing what ‘the algorithm’ says, even though it was the company that commissioned the algorithm in the first place.”

“If you think of A.I. as a broad set of technologies being marketed to companies to help them cut their costs, the question becomes: how do we keep those technologies from working as ‘capital’s willing executioners’?”

“If you imagine A.I. as a semi-autonomous software program that solves problems that humans ask it to solve, the question is then: how do we prevent that software from assisting corporations in ways that make people’s lives worse?”

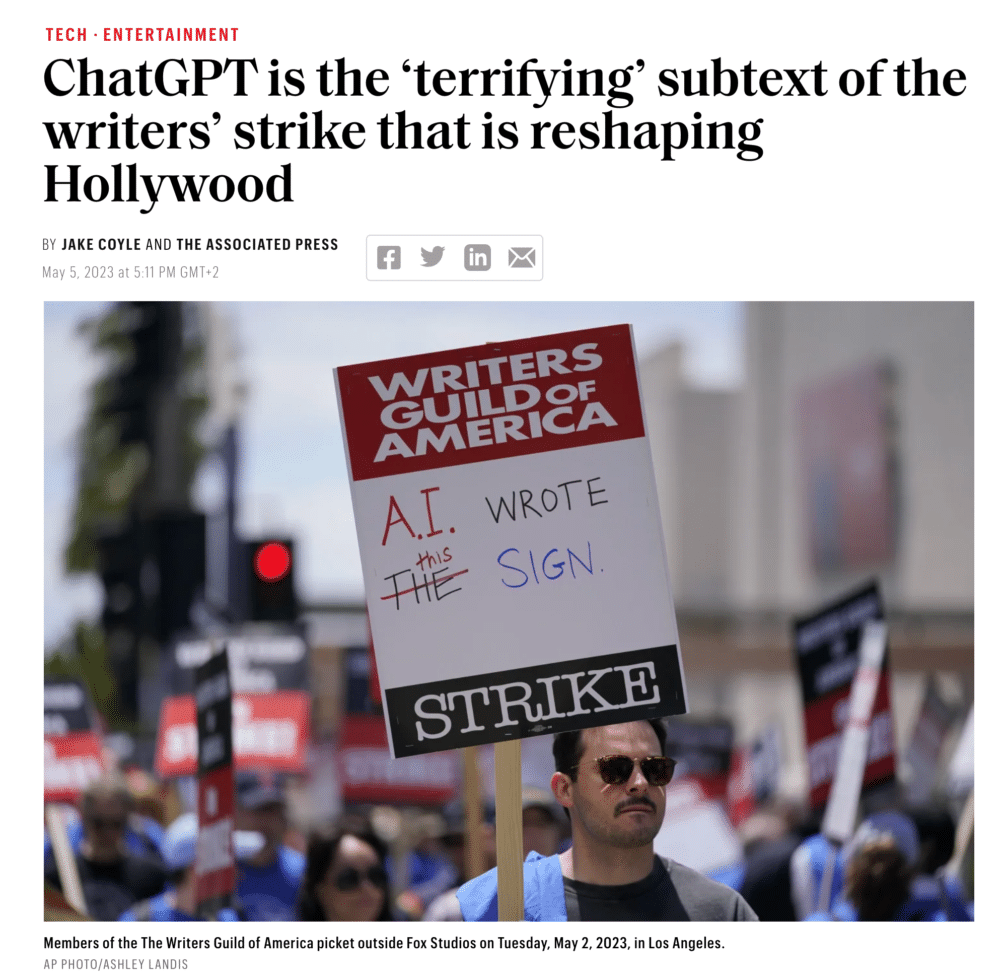

And here is the Motherboard / VICE piece on the writers’ strike in Hollywood, outlining very much the same problem: the great promises of amazing technologies are turned into tools of reductionism and profit optimisation. When will we ever learn?

“Over the negotiation, it became clear that the AI proposals are really part of a larger pattern. The studios would love to treat writers as gig workers. They want to be able to hire us for a day at a time, one draft at a time, and get rid of us as quickly as possible. I think they see AI as another way to do that,” said John August, a screenwriter known for writing the films Charlie’s Angels and Charlie and the Chocolate Factory.

“The WGA says it’s imperative that ‘source material’ can’t be something generated by an AI, either. This is especially important because studios frequently hire writers to adapt source material (like a novel, an article, or other IP) into new work to be produced as TV or films,” August added. “It’s very easy to imagine a situation in which a studio uses AI to generate ideas or drafts, claims those ideas are ‘source material,’ and hires a writer to polish it up for a lower rate.”

“The immediate fear of AI isn’t that us writers will have our work replaced by artificially generated content. It’s that we will be underpaid to rewrite that trash into something we could have done better from the start. This is what the WGA is opposing and the studios want.” (C. Robert Cargill)